Google’s AI research lab DeepMind has announced that, in partnership with Google Cloud, it’s launching a beta version of SynthID, a tool for watermarking and identifying AI-generated images.

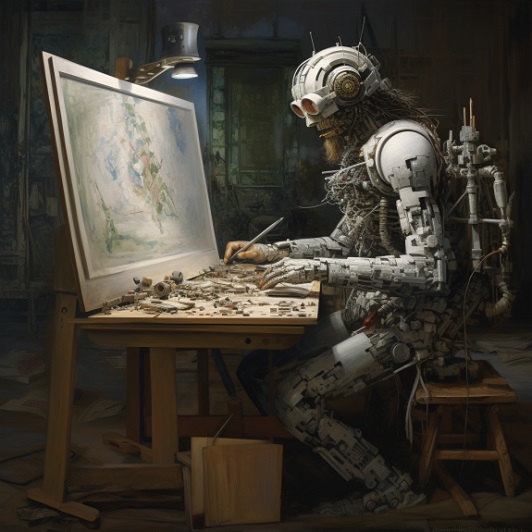

The AI Image Challenge

Generative AI technologies are rapidly evolving, and AI-generated imagery, also known as ‘synthetic imagery,’ is becoming much harder to distinguish from images not created by an AI system. Many AI-generated images are now convincing enough to fool people easily, and with so many (often free) AI image generators in widespread use, misuse is becoming more common.

This raises a host of ethical, legal, economic, technological, and psychological concerns, ranging from the proliferation of deepfakes that can be used for misinformation and identity theft to legal ambiguities around intellectual property rights for AI-generated content. There is also potential for job displacement in creative fields, as well as the risk of perpetuating social and algorithmic biases. The technology also challenges our perception of reality and could erode public trust in digital media. Although tackling the challenge of synthetic imagery calls for a multidisciplinary approach, many believe that watermarking could help with issues such as ownership, misuse, and accountability.

What Is Watermarking?

Adding visible watermarks to images to show copyright and ownership is a method that’s been used for many years on sites including Getty Images, Shutterstock, iStock Photo, Adobe Stock and many more, whereas creating a special kind of watermark to identify images as AI-produced is a relatively new idea. A watermark is a design layered onto an image to identify it. Watermarks can be visible or invisible, and reversible or irreversible. Adding a watermark can make it more difficult for an image to be copied and used without permission.
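To make the idea of an invisible watermark concrete, here is a minimal Python sketch of least-significant-bit (LSB) embedding, a classic textbook technique. It is not SynthID’s method, which this article does not describe; the function names `embed_lsb` and `extract_lsb` and the representation of an image as a flat list of 8-bit grayscale values are illustrative assumptions. Each pixel value changes by at most 1 out of 255, which the eye cannot see, but LSB marks are easily destroyed by lossy compression or resizing, which is why robustness to edits matters so much for AI image watermarking.

```python
# Toy invisible watermark: hide a short message in the least-significant bit
# of each pixel value. Illustrative only; this is NOT how SynthID works.


def embed_lsb(pixels: list[int], message: str) -> list[int]:
    """Return a copy of 8-bit grayscale `pixels` with `message` hidden in the LSBs."""
    # Expand each byte of the UTF-8 message into 8 bits, most significant first.
    bits = [int(b) for byte in message.encode("utf-8") for b in f"{byte:08b}"]
    if len(bits) > len(pixels):
        raise ValueError("image too small for message")
    out = list(pixels)
    for i, bit in enumerate(bits):
        out[i] = (out[i] & ~1) | bit  # clear the lowest bit, then set it to the message bit
    return out


def extract_lsb(pixels: list[int], n_bytes: int) -> str:
    """Read back `n_bytes` bytes of hidden message from the LSBs."""
    bits = [p & 1 for p in pixels[: n_bytes * 8]]
    data = bytes(
        int("".join(map(str, bits[i : i + 8])), 2) for i in range(0, len(bits), 8)
    )
    return data.decode("utf-8")


image = [128] * 64                 # a tiny flat grey "image"
marked = embed_lsb(image, "AI")    # visually identical: values are now 128 or 129
print(extract_lsb(marked, 2))      # AI
```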

What’s The Challenge With AI Image Watermarking?

AI-generated images can be produced on the fly, customised, and highly complex, which makes it challenging to apply a one-size-fits-all watermarking technique. AI can also generate a large number of images in a short period of time, making traditional watermarking impractical. Simply adding a visible watermark to one area of an image (e.g. near the edges) is not enough either, because it can be cropped out or edited away.

Google’s SynthID Watermarking

Google’s SynthID tool works with Google Cloud’s ‘Imagen’ text-to-image diffusion model (an AI text-to-image generator) and combines two functions: adding watermarks and detecting them. First, it can add an imperceptible watermark to synthetic images produced by Imagen without compromising image quality, and the watermark remains detectable even after modifications such as adding filters, changing colours, or saving with lossy compression schemes (most commonly used for JPEGs). Second, SynthID can scan an image for its digital watermark, assess the likelihood that the image was created by Imagen, and give the user one of three confidence levels for interpreting the result.
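The three-level output described above can be sketched as a simple mapping from a detector score to a label. This is a hypothetical illustration: SynthID’s real scoring method and cut-offs are not described in this article, so the `interpret` function, the 0-to-1 score scale, the two thresholds, and the exact label wording are all assumptions.

```python
from enum import Enum


class Confidence(Enum):
    # Assumed label wording for the three confidence levels.
    DETECTED = "watermark detected"
    POSSIBLY_DETECTED = "watermark possibly detected"
    NOT_DETECTED = "watermark not detected"


# Hypothetical thresholds on an assumed detector score in [0, 1].
HIGH_THRESHOLD = 0.9
LOW_THRESHOLD = 0.5


def interpret(score: float) -> Confidence:
    """Map a (hypothetical) watermark-detector score to one of three levels."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be in [0, 1]")
    if score >= HIGH_THRESHOLD:
        return Confidence.DETECTED
    if score >= LOW_THRESHOLD:
        return Confidence.POSSIBLY_DETECTED
    return Confidence.NOT_DETECTED


for s in (0.97, 0.6, 0.1):
    print(f"{s}: {interpret(s).value}")
```

The middle band is the design point worth noting: rather than forcing a yes/no answer on an ambiguous image (for example, one that has been heavily edited), the detector can report uncertainty to the user.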

Based On Metadata

Adding metadata to an image file (e.g. who created it and when), and adding a digital signature to that metadata, can show whether an image has been changed. Where the metadata is intact, users can easily identify an image, but metadata can be removed, either deliberately or when files are edited.
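As a rough sketch of how signed metadata reveals tampering, the Python example below binds a metadata dictionary to a hash of the image bytes and signs the result. For simplicity it uses an HMAC with a shared secret in place of the public-key digital signatures that real provenance systems use; the key, the `sign` and `verify` helpers, and the sample metadata are hypothetical.

```python
import hashlib
import hmac
import json

SECRET_KEY = b"demo-key"  # hypothetical shared secret; real systems use public-key signatures


def sign(image_bytes: bytes, metadata: dict) -> str:
    """Sign the metadata together with a hash of the exact image bytes."""
    payload = (
        json.dumps(metadata, sort_keys=True).encode("utf-8")
        + hashlib.sha256(image_bytes).digest()
    )
    return hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()


def verify(image_bytes: bytes, metadata: dict, signature: str) -> bool:
    """True only if neither the pixels nor the metadata changed since signing."""
    return hmac.compare_digest(sign(image_bytes, metadata), signature)


image = b"\x00\x01\x02"                        # stand-in for real image data
meta = {"creator": "example-user", "tool": "example-generator"}
sig = sign(image, meta)

print(verify(image, meta, sig))                              # True
print(verify(image + b"\x03", meta, sig))                    # False: pixels edited
print(verify(image, {**meta, "creator": "someone"}, sig))    # False: metadata edited
```

Note the limitation the paragraph above points out: if someone simply strips the metadata and signature, there is nothing left to verify, so this approach proves tampering only while the metadata survives.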

Google says the SynthID watermark is embedded in the pixels of an image and is compatible with other image identification approaches based on metadata. Most importantly, the watermark remains detectable even when the metadata is lost.

Other Advantages

Other advantages of SynthID’s watermarking and detection tool include:

– The changes made to images are imperceptible to the human eye.

– Even if an image has been heavily edited, with its colour, contrast and size changed, the DeepMind technology behind the tool can still detect whether the image is AI-generated.

Part Of The Voluntary Commitment

The idea of watermarking to expose and filter AI-generated images falls within the voluntary commitments recently made by seven leading AI companies (Amazon, Anthropic, Google, Inflection, Meta, Microsoft, and OpenAI) to develop AI safeguards. Under the ‘Earning the Public’s Trust’ heading, the companies committed to developing robust technical mechanisms, such as a watermarking system, to ensure that users know when content is AI-generated, thereby enabling creativity with AI while reducing the dangers of fraud and deception.

What Does This Mean For Your Business?

It’s now very easy for people to generate images with any of the many AI image generators available, and many of these images can fool viewers, with potential ethical, legal, economic, political, technological, and psychological consequences. A system that can reliably identify AI-generated images (even heavily edited ones) is therefore of value to businesses, citizens, and governments.

Although Google admits that SynthID is still experimental and not foolproof, it at least means that a fairly reliable tool will be available soon, at a time when AI seems to be running ahead of regulation and protection. One challenge, however, is that although the big tech companies have made a general commitment to watermarking, SynthID is closely tied to Google DeepMind, Google Cloud and Imagen, and other companies may pursue different methods. In other words, there may be a lack of standardisation.

That said, it’s a timely development, and it remains to be seen how successful it will be and how watermarking and other methods will develop going forward.